Cloud Based Testbed Manager (CBTM)

What is CBTM?

CBTM is software responsible for creating and destroying Virtual Machines (VMs) on remote hosts. It is written in Python and was developed and originally distributed by Trinity College Dublin (TCD) in Ireland.

Besides handling VMs, it is also responsible for allocating resources to them, for example Universal Software Radio Peripherals (USRPs) and other resources available on the network.

CBTM must run on a server (from now on, we call the computer running CBTM the VMs Server), and this server must be connected to the VM host computers via a network switch.

For the CBTM to work properly, a few conditions must be met:

- Kernel-based Virtual Machine (KVM)/libvirt must be installed on every host machine.

- The host machines should be connected to the same subnet as the CBTM Server.

- The CBTM must know the IPs of every host machine.

- The VMs Server must have access to the host machines via SSH (the VMs Server's SSH key must be copied to every host machine).

- The VMs' base images must be copied to every host's image pool.

- The XML files that define the machines and the volumes must be stored somewhere on the VMs Server, and their location must be known by the CBTM.
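The network-related conditions above can be checked before CBTM starts. The following is a minimal sketch, not CBTM's actual code: the host addresses, the subnet, and the `cbtm` user name are hypothetical values chosen for illustration. The `qemu+ssh://` form is libvirt's remote URI scheme for KVM hosts reached over SSH.

```python
import ipaddress

# Hypothetical values for illustration; a real deployment supplies its own.
SERVER_SUBNET = ipaddress.ip_network("192.168.10.0/24")
HOST_IPS = ["192.168.10.11", "192.168.10.12"]


def hosts_outside_subnet(host_ips, subnet):
    """Return the host IPs that are not part of the server's subnet."""
    return [ip for ip in host_ips if ipaddress.ip_address(ip) not in subnet]


def libvirt_uri(host_ip, user="cbtm"):
    """Build a libvirt remote URI for a KVM host reached over SSH."""
    return f"qemu+ssh://{user}@{host_ip}/system"


if __name__ == "__main__":
    # An empty list means every known host shares the server's subnet.
    print(hosts_outside_subnet(HOST_IPS, SERVER_SUBNET))
    for ip in HOST_IPS:
        print(libvirt_uri(ip))
```

Connecting through such a URI only works without a password prompt once the server's SSH key has been copied to the host, which is why that condition appears in the list.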

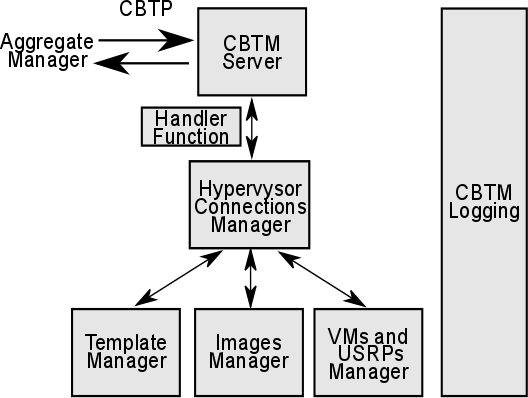

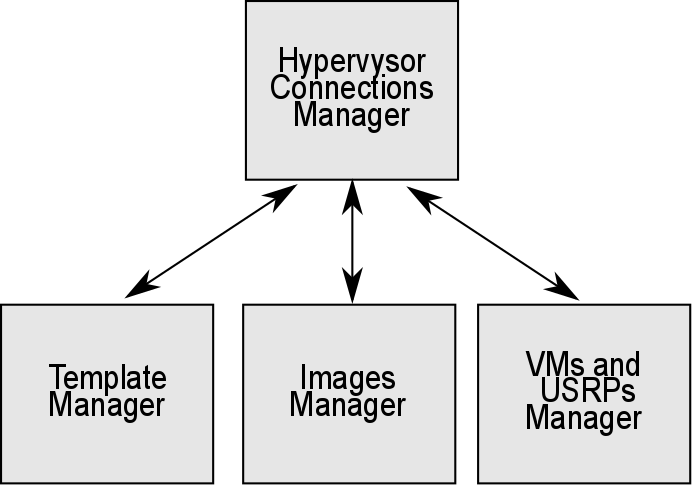

The internal structure of the CBTM is shown in Figure 1. It can be split into a back-end, depicted in Figure 2, which interfaces with the host machines, and a front-end, which interfaces with the Aggregate Manager (AM). The back-end is composed of three modules: the Template Manager, which controls the XML files and the statuses of the defined VMs; the Images Manager, which controls the VM images, such as their disks; and the VMs and USRPs Manager, which controls the available resources in the testbed, allocating VMs and USRPs to available host machines.
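To make the Template Manager's role more concrete, the sketch below fills in a machine-defining XML template for one VM. It is an illustration only: the template contents, the placeholder names, the default sizes, and the function name `render_domain_xml` are assumptions, not the files or code shipped with CBTM. The element names follow libvirt's domain XML format.

```python
from string import Template
from xml.sax.saxutils import escape
import xml.etree.ElementTree as ET

# Hypothetical, heavily trimmed domain template; real templates carry
# more devices (network interfaces, consoles, and so on).
DOMAIN_TEMPLATE = Template("""\
<domain type='kvm'>
  <name>$name</name>
  <memory unit='MiB'>$memory_mib</memory>
  <vcpu>$vcpus</vcpu>
  <os><type arch='x86_64'>hvm</type></os>
  <devices>
    <disk type='file' device='disk'>
      <driver name='qemu' type='qcow2'/>
      <source file='$disk_path'/>
      <target dev='vda' bus='virtio'/>
    </disk>
  </devices>
</domain>
""")


def render_domain_xml(name, disk_path, memory_mib=2048, vcpus=2):
    """Fill the template and confirm that the result is well-formed XML."""
    xml = DOMAIN_TEMPLATE.substitute(
        name=escape(name),
        # The attribute is single-quoted, so single quotes are escaped too.
        disk_path=escape(disk_path, {"'": "&apos;"}),
        memory_mib=int(memory_mib),
        vcpus=int(vcpus),
    )
    ET.fromstring(xml)  # raises ParseError if the XML is malformed
    return xml
```

Keeping the per-VM values out of the template means one file can serve every VM of a given image type, with only the name and disk path changing.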

The CBTM is divided into two parts: the CBTM Server, which actually manages the VMs, and the CBTM Client, which connects to the Server and issues commands from the user. The AM/Coordinator uses the same interface to the CBTM as the Client. The following commands are supported by the CBTM Client:

- Request unit (ru):
  - Receives four arguments:
    - The ID of the USRP to be used.
    - The type of image to be used.
    - The username to be created inside the VM.
    - The SSH key of the user, to be placed inside the VM for login.
  - Returns the IP of the freshly created VM.

- Release unit (rl):
  - Receives one argument:
    - The ID of the USRP to be released.
  - Destroys and undefines the requested VM, if it exists.

- Grid status (gs):
  - Receives no arguments.
  - Prints the IDs of the USRPs in use.

- Unit status (us):
  - Receives one argument:
    - The ID of the USRP corresponding to the VM to be checked.
  - Prints:
    - free: if no VM is defined on that host.
    - IP: if a VM is defined and turned on, prints its IP.
    - 0.0.0.0: if a VM is defined and shut down.

- Start unit (su):
  - Receives one argument:
    - The ID of the USRP.
  - Starts the requested VM.

- Shutdown unit (sdu):
  - Receives one argument:
    - The ID of the USRP.
  - Shuts down the requested VM.

- Restart unit (rbu):
  - Receives one argument:
    - The ID of the USRP.
  - Restarts the requested unit.

- Set port (sp):
  - Receives one argument:
    - The desired port.
  - Changes the port used by the server to the one passed as a parameter.

- Set host (sh):
  - Receives one argument:
    - The IP of the new host.
  - Changes the IP of the Server to which the CBTM Client connects.

- Quit (q):
  - Receives no arguments.
  - Quits the CBTM Client.
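The command set above can be summarised as a table of names and argument counts. The sketch below is not the CBTM Client's actual parser: it assumes whitespace-separated input with shell-style quoting (so that an SSH key containing spaces can be passed as one argument), and the function names are hypothetical.

```python
import ipaddress
import shlex

# Command name -> number of arguments, taken from the list above.
COMMANDS = {
    "ru": 4,   # request unit: USRP ID, image type, username, SSH key
    "rl": 1,   # release unit: USRP ID
    "gs": 0,   # grid status
    "us": 1,   # unit status: USRP ID
    "su": 1,   # start unit: USRP ID
    "sdu": 1,  # shutdown unit: USRP ID
    "rbu": 1,  # restart unit: USRP ID
    "sp": 1,   # set port: port number
    "sh": 1,   # set host: server IP
    "q": 0,    # quit
}


def parse_command(line):
    """Split an input line into (command, args) and check the argument count."""
    parts = shlex.split(line)
    if not parts:
        raise ValueError("empty command")
    name, args = parts[0], parts[1:]
    if name not in COMMANDS:
        raise ValueError(f"unknown command: {name}")
    if len(args) != COMMANDS[name]:
        raise ValueError(
            f"{name} expects {COMMANDS[name]} argument(s), got {len(args)}"
        )
    return name, args


def describe_unit_status(reply):
    """Interpret the three kinds of reply that 'us' prints."""
    if reply == "free":
        return "no VM defined"
    if reply == "0.0.0.0":
        return "VM defined but shut down"
    ipaddress.ip_address(reply)  # raises ValueError for anything else
    return f"VM running at {reply}"
```

For example, `parse_command('ru 3 gnuradio alice "ssh-rsa AAAA alice@laptop"')` yields the command name `ru` and four arguments; the image type `gnuradio` is a made-up value.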

Figure 1: CBTM Overall Structure

Figure 2: CBTM Backend

- What is it based on?

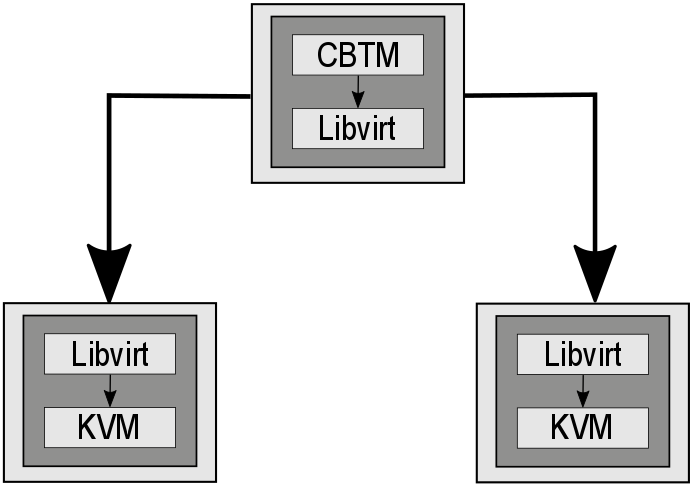

CBTM relies on KVM, using libvirt as its API. The libvirt Python API provides Python functions for creating and managing VMs on remote hosts.

“KVM is a full virtualization solution for Linux on x86 hardware containing virtualization extensions (Intel VT or AMD-V). It consists of a loadable kernel module, kvm.ko, that provides the core virtualization infrastructure and a processor specific module, kvm-intel.ko or kvm-amd.ko.

Using KVM, one can run multiple VMs running unmodified Linux or Windows images. Each VM has private virtualized hardware: a network card, disk, graphics adapter, etc.” 1

“Libvirt is a collection of software that provides a convenient way to manage VMs and other virtualization functionality, such as storage and network interface management. These software pieces include an API library, a daemon (libvirtd), and a command line utility (virsh).” 2
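As an illustration of how the libvirt Python API maps onto creating and releasing a VM, here is a minimal sketch. It is not CBTM's implementation, and the helper names are hypothetical. The methods called on the connection and domain objects (`listAllDomains`, `defineXML`, `name`, `isActive`, `create`, `destroy`, `undefine`) are part of the libvirt Python bindings; the helpers take an already opened connection so they can be exercised without a hypervisor.

```python
def find_domain(conn, name):
    """Return the domain called `name`, or None if the host has no such VM."""
    for dom in conn.listAllDomains():
        if dom.name() == name:
            return dom
    return None


def create_vm(conn, domain_xml):
    """Define a persistent VM from its XML description and boot it."""
    dom = conn.defineXML(domain_xml)
    dom.create()
    return dom


def release_vm(conn, name):
    """Destroy and undefine a VM if it exists; return True if one was removed."""
    dom = find_domain(conn, name)
    if dom is None:
        return False
    if dom.isActive():
        dom.destroy()   # hard power-off of the running guest
    dom.undefine()      # remove the persistent definition from the host
    return True


# Usage against a real host (requires the libvirt Python bindings; the
# user, address, and VM name below are hypothetical):
#   import libvirt
#   conn = libvirt.open("qemu+ssh://cbtm@192.168.10.11/system")
#   release_vm(conn, "vm-usrp-1")
```

In libvirt terminology, "destroy" stops a running guest immediately while "undefine" deletes its configuration, so both steps are needed to remove a VM entirely.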

- How does it integrate into the testbed infrastructure?

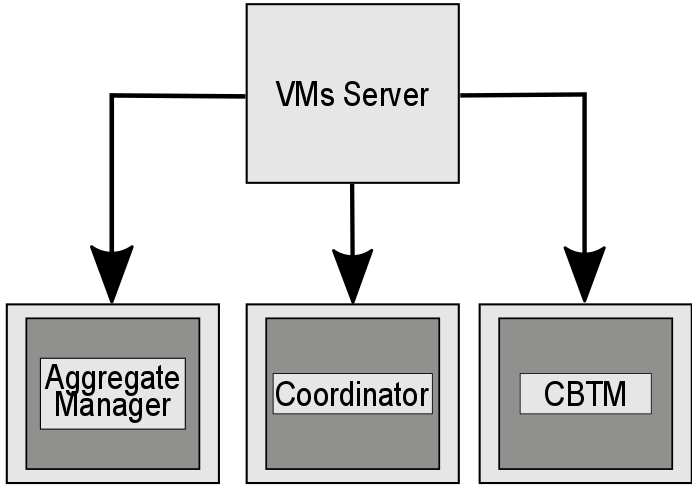

The CBTM is the backend software in the testbed infrastructure. It directly connects to the libvirt API running on the VMs Hosts. It receives commands from the Coordinator, which itself receives commands from the AM.

Figures 3 and 4 show how the software integrates into the infrastructure.

Figure 3: CBTM connecting to libvirt on the remote hosts

Figure 4: VMServer running the AM, ahead of the Coordinator, which in turn sits before the CBTM

- Differences between the FUTEBOL testbeds at TCD and UFRGS

CBTM was originally developed, deployed and distributed by the TCD FUTEBOL group. Owing to the infrastructure differences between the testbeds, a few modifications were necessary for the original CBTM software to manage and federate the resources at the FUTEBOL Brazil/UFRGS testbed.

- Availability and usage of resources in each FUTEBOL testbed

At TCD, the USRPs are connected to the hosts via Ethernet cable, whereas at UFRGS they are connected via USB 3.0. Some modifications were therefore required to pass the USB controller through to the VM, so that the experimenter could access the USRP. This implied a series of changes to the source code and adaptations of the VM base images and XML files.
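The text does not say how the USB controller is passed to the VM. One common libvirt mechanism is PCI passthrough of the host's USB 3.0 controller through a `<hostdev>` element in the machine-defining XML, and the sketch below assumes that approach. The PCI address and the function names are hypothetical; on a real host the address would be read from a tool such as `lspci`.

```python
import xml.etree.ElementTree as ET


def usb_controller_hostdev(bus, slot, function, domain=0):
    """Build a libvirt <hostdev> element that hands a PCI device to a guest."""
    hostdev = ET.Element("hostdev", mode="subsystem", type="pci", managed="yes")
    source = ET.SubElement(hostdev, "source")
    ET.SubElement(
        source,
        "address",
        domain=f"0x{domain:04x}",
        bus=f"0x{bus:02x}",
        slot=f"0x{slot:02x}",
        function=f"0x{function:x}",
    )
    return hostdev


def attach_to_domain_xml(domain_xml, hostdev):
    """Return a copy of the domain XML with the device added under <devices>."""
    root = ET.fromstring(domain_xml)
    devices = root.find("devices")
    if devices is None:
        devices = ET.SubElement(root, "devices")
    devices.append(hostdev)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical PCI address 0000:00:14.0 for the host's USB 3.0 controller.
    base = "<domain type='kvm'><name>vm-usrp-1</name><devices/></domain>"
    print(attach_to_domain_xml(base, usb_controller_hostdev(0x00, 0x14, 0x0)))
```

Passing the whole controller, rather than a single USB device, gives the guest every port on that controller, which suits a setup where each host's USRP sits on a dedicated controller.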